Modern touch devices depend heavily on visual cues. Their smooth surfaces leave considerable room to improve usability and user experience through haptic feedback.

The ultimate goal is a “Haptic Operating System” with a unified “haptic language” for all UI elements and interactions. Once familiar, this additional haptic layer could improve the usability of touch devices, perhaps even to the point where looking at a device is no longer always necessary. That could make touch devices not only more comfortable to use but, in some situations, safer. This project takes a first step in this direction by creating a research tool and framework for investigating haptic user interfaces.

The “Haptic Explorer” can generate a range of haptic impressions. It vibrates a touch device slightly, which makes it possible to experiment with the parameters amplitude and frequency, alone and in any combination.

Several interactive pages were created to explore basic concepts, ranging from haptic feedback for simple shapes to more complex textures. They helped to better understand some of the limitations of the sense of touch in this context and to gather feedback from people who tried the prototype themselves.

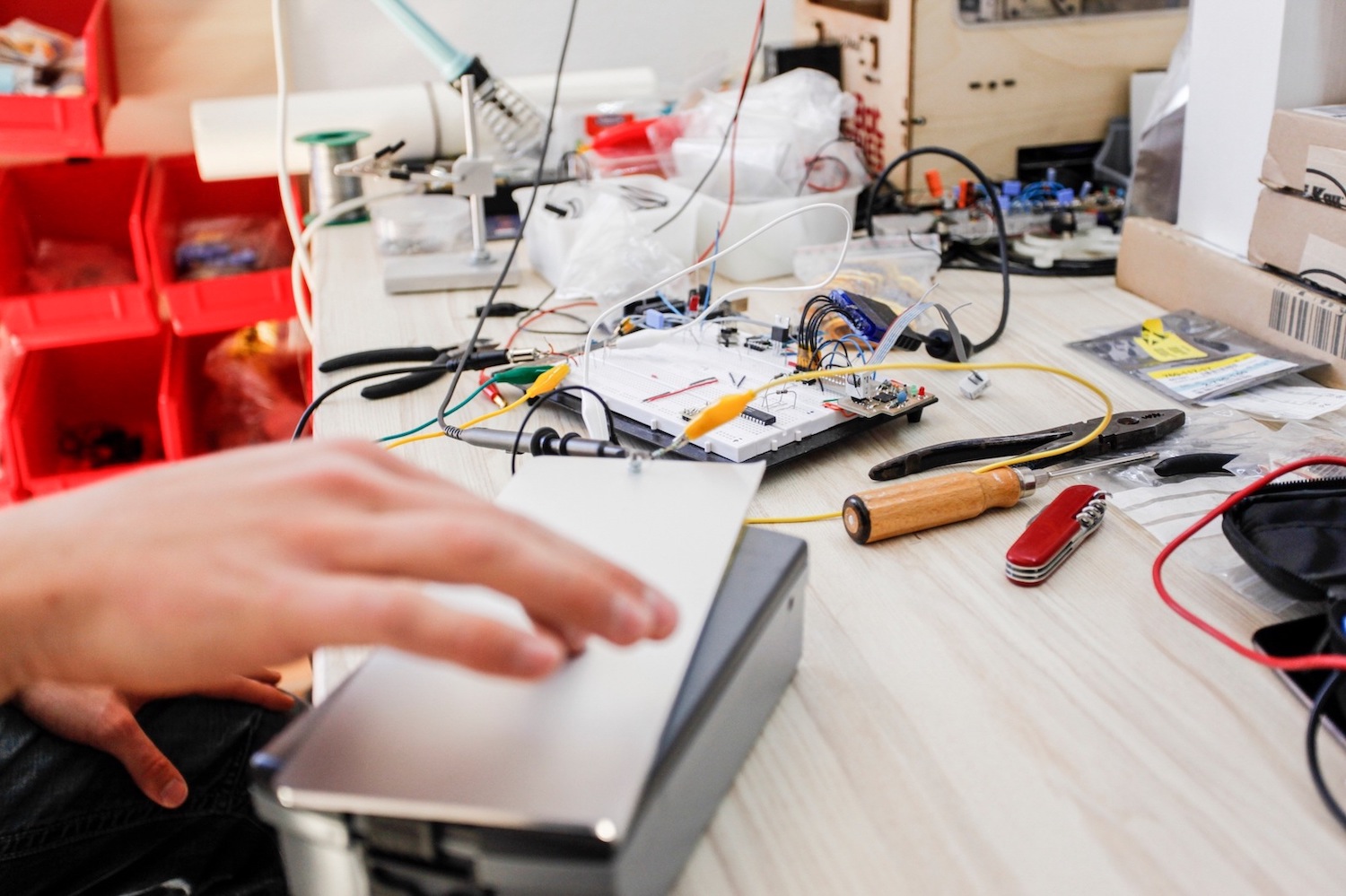

Technical Implementation

Two technical approaches to providing haptic feedback were explored. The first prototype focused on “Electro Vibrations”: in short, a high alternating voltage separated from the finger by a thin insulating layer. Under certain circumstances, this effect can be felt when sliding a finger lightly over devices made of anodized aluminum, such as a MacBook. When the voltage is applied, the fingers feel some drag as they slide across the surface. See, for example, TeslaTouch.

The anodized aluminum plate used here did not prove satisfactory for the project. How well the feedback could be perceived depended strongly on the thickness of the anodized layer, and it was noticeable only while the finger was sliding, not while it was held still.

The next iteration pursued the second approach, “Physical Vibrations”. A vibration motor was tested first, but it offers fewer parameters to manipulate and introduces a certain delay when starting and stopping. This was followed by the last iteration, “Auditory Vibrations”. An iPad was physically connected to a speaker, which makes the same parameters as the “Electro Vibration” technology available (frequency and amplitude), combined with low latency. The frequencies used were kept low so that the vibration is perceptible while remaining as inaudible as possible.
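To make the two parameters concrete, the following is a minimal sketch of how a low-frequency sine burst could be computed from a frequency and an amplitude. It is not the project's code: the names `HapticParams`, `constrainParams`, and `renderSineBurst` are hypothetical, and the 20 to 250 Hz band is only a placeholder assumption standing in for "low", not the range used in the prototype.

```typescript
// Hypothetical parameter pair; names and ranges are assumptions for illustration.
interface HapticParams {
  frequencyHz: number; // vibration frequency
  amplitude: number;   // relative strength, 0 (off) to 1 (full scale)
}

// Assumed placeholder band, not the values used in the project.
const MIN_FREQUENCY_HZ = 20;
const MAX_FREQUENCY_HZ = 250;

function clamp(value: number, min: number, max: number): number {
  return Math.min(max, Math.max(min, value));
}

// Keep the frequency inside the assumed low band and the amplitude in [0, 1].
function constrainParams(params: HapticParams): HapticParams {
  return {
    frequencyHz: clamp(params.frequencyHz, MIN_FREQUENCY_HZ, MAX_FREQUENCY_HZ),
    amplitude: clamp(params.amplitude, 0, 1),
  };
}

// Render a sine burst as raw samples that a speaker could play back.
function renderSineBurst(
  params: HapticParams,
  durationSeconds: number,
  sampleRate: number = 44100,
): Float32Array {
  const { frequencyHz, amplitude } = constrainParams(params);
  const samples = new Float32Array(Math.round(durationSeconds * sampleRate));
  for (let i = 0; i < samples.length; i++) {
    samples[i] = amplitude * Math.sin((2 * Math.PI * frequencyHz * i) / sampleRate);
  }
  return samples;
}
```

Varying `frequencyHz` and `amplitude` independently in such a function mirrors the kind of parameter exploration described above; the clamping step simply keeps experiments inside whatever band turns out to be tangible but quiet.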

The housing of the final prototype keeps everything in place while allowing important parameters to be changed.

The software side was implemented with web technologies. The Web Audio API was used to generate various sounds that the speaker transformed into physically tangible sensations.
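As an illustration of this approach, the sketch below drives a speaker with a sine tone using a Web Audio oscillator and a gain node, so that frequency and amplitude can be set independently. It is an assumption about how such a tone might be produced, not the project's actual implementation; `playVibration` and the `...Like` interfaces are hypothetical names, and the interfaces only list the Web Audio members the sketch relies on so that it stays self-contained.

```typescript
// Minimal structural types covering only the Web Audio members used below;
// a browser AudioContext is expected to satisfy them.
interface ParamLike {
  setValueAtTime(value: number, time: number): unknown;
}
interface NodeLike {
  connect(target: unknown): unknown;
}
interface OscillatorLike extends NodeLike {
  type: string;
  frequency: ParamLike;
  start(when?: number): void;
  stop(when?: number): void;
}
interface GainLike extends NodeLike {
  gain: ParamLike;
}
interface AudioContextLike {
  currentTime: number;
  destination: unknown;
  createOscillator(): OscillatorLike;
  createGain(): GainLike;
}

// Hypothetical helper: play a sine tone with the given frequency and amplitude.
function playVibration(
  ctx: AudioContextLike,
  frequencyHz: number,
  amplitude: number,
  durationSeconds: number,
): OscillatorLike {
  const oscillator = ctx.createOscillator();
  const gain = ctx.createGain();
  const now = ctx.currentTime;

  oscillator.type = "sine";
  oscillator.frequency.setValueAtTime(frequencyHz, now); // frequency parameter
  gain.gain.setValueAtTime(amplitude, now);              // amplitude parameter

  // oscillator -> gain -> audio output (the speaker attached to the device)
  oscillator.connect(gain);
  gain.connect(ctx.destination);

  oscillator.start(now);
  oscillator.stop(now + durationSeconds);
  return oscillator;
}
```

In a browser, this could be called as `playVibration(new AudioContext(), 80, 0.5, 0.25)`, ideally from a touch or click handler, since browsers commonly block audio output until the user has interacted with the page. The values 80 Hz, 0.5, and 0.25 s are arbitrary examples.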

Only two months after the completion of this project, Apple announced the first devices with its so-called “Taptic Engine”, which indeed gives haptic feedback for some interface elements and interactions. Surprisingly, this “Taptic Engine” works quite similarly to this prototype. It is not yet a “Haptic Operating System”, but it supports the assumption of this project that haptic feedback on touch devices can improve their usability.

This project was part of my interaction design studies at HfG Schwäbisch Gmünd.

Together with Daniel Keller and Maximilian Popp, 3rd semester, “Invention Design”.

Advisor: Prof. Jörg Beck