The exhibition »Superintelligence Control Problem« explores the potential risks of future artificial intelligence. As soon as artificial intelligence surpasses humankind's capabilities, it becomes particularly difficult to control. This is why a so-called artificial superintelligence (ASI) should inherently be oriented towards human values. However, defining its goal accordingly proves to be a challenge.

The Topic

Current »artificial narrow intelligences« are constantly replacing people in their cognitive tasks in more and more disciplines. This trend will most likely continue, and humanity is and will be confronted with many problems along the way.

»By a ›superintelligence‹ we mean an intellect that is much smarter than the best human brains in practically every field, including scientific creativity, general wisdom and social skills.«

This project is about the problem we might face with an artificial superintelligence. Current technologies can already cause considerable damage because even now we are not able to explain to a computer what we mean in a broad sense. Examples are the flash crash of 2010 and the social injustice in many of today's machine learning applications. Presently, we can attempt to solve these problems, but with a literally unimaginably intelligent and powerful artificial entity, humanity probably only has one chance to correctly define what we mean. Not to mention the fact that humans could not even agree on a common »what we mean«. In addition, development is mostly driven by the capitalist game, which tends to focus on profit rather than human values.

»The development of full artificial intelligence could spell the end of the human race.«

This bachelor thesis tries to increase the chance of a positive outcome of ASI by increasing the awareness of this control problem.

Research

The initial inspiration came from Tim Urban's blog post The AI Revolution: The Road to Superintelligence on Wait But Why.

»It hit me pretty quickly that what's happening in the world of AI is not just an important topic, but by far THE most important topic for our future.«

Much of the research is based on three books, interviews with professors of science, sociology, machine learning and robotics, and a survey. The books are:

- Nick Bostrom: Superintelligence: Paths, Dangers, Strategies (Superintelligenz Szenarien einer kommenden Revolution)

- Ray Kurzweil: The Age of Intelligent Machines (Das Zeitalter der Künstlichen Intelligenz)

- Ray Kurzweil: The Age of Spiritual Machines (Homo S@piens: Leben im 21. Jahrhundert - Was bleibt vom Menschen?)

The research turned out to be very interesting, but also very challenging. It already starts with the unclear definitions of »artificial«, »intelligence«, and, you guessed it, »artificial intelligence«. If you dive deeper into the subject, terms like »consciousness« appear, which are even harder to define. Then there are psychological aspects like the constant underestimation of future technological development and thus the underestimation of this problem. In addition, »anthropomorphism«, the human tendency to attribute human-like characteristics to everything, is fueled by many science fiction works. This makes the discussion about a future artificial intelligence that merely follows some instructions and is not inherently »good« or »evil« an even greater challenge.

From a human perspective it may also be difficult to imagine that human-level intelligence is not a special milestone or even a limit. Future artificial intelligence can likely improve itself and at some point simply surpass human-level intelligence. Because of its increased intelligence, it will be able to improve at an ever-increasing rate. This could mean that the appearance of ASI could be sudden and unexpected.

There are many aspects and scenarios to consider when dealing with this problem. But in general there are two approaches to controlling future artificial intelligence. One is to limit the possibilities of a superintelligence, which is probably presumptuous since our abilities pale in comparison to the entity we want to control. The other approach is to accept that we could never be sure to contain it, and to try to align its goals with human values. But even if humanity could agree on universal human values, which, by the way, would be constantly changing, the question remains how such abstract ideas can be formulated and conveyed. There are also more indirect approaches to this problem, but with none of them can we be 100% certain that there is not one tiny flaw that would lead to an existential risk for all of humanity. This project does not want to advocate a single approach, but rather to raise awareness of this potentially existential problem, so that it is seen as part of the inevitable further development of artificial intelligence. We might only get one chance to get it right.

»The claim is that it is much easier to convince oneself that one has found a solution than it is to actually find a solution.«

To raise awareness, the idea is to directly address people who will be working in any AI-related field in the future. A survey was conducted to get a feeling for how established the topic is in various fields of study. A total of 130 students responded to our survey. They come from 7 universities in Germany and a wide range of study programs such as engineering, computer science, mathematics, neuroscience, philosophy and psychology. Of these, only 7% had dealt with the problems of artificial superintelligence during their studies. And 80% had covered the topic of AI in class either not at all or not intensively.

The Exhibit

To achieve a lasting effect in the minds of people working in AI-related fields, it was decided to present this topic as a memorable experience in the form of an exhibit. It is designed so that no prior knowledge of the topic is necessary, which means that prospective students are included as well. This project was also concerned with the different stakeholders and how our target audience would be made aware of the exhibition. A whole exhibition on this topic was roughly conceived. However, the focus of the remaining project is on the realization of the introduction exhibit. It should generate enthusiasm on an emotional and technical level and inspire people to want to learn more about the topic.

To create a memorable user experience, a robot is the central element. It functions as the symbolic body of an otherwise intangible AI and as a visual link to the topic, which also works well from afar. The robot is also the guide through the narrative and within the narrative it can simultaneously represent the role of AI itself.

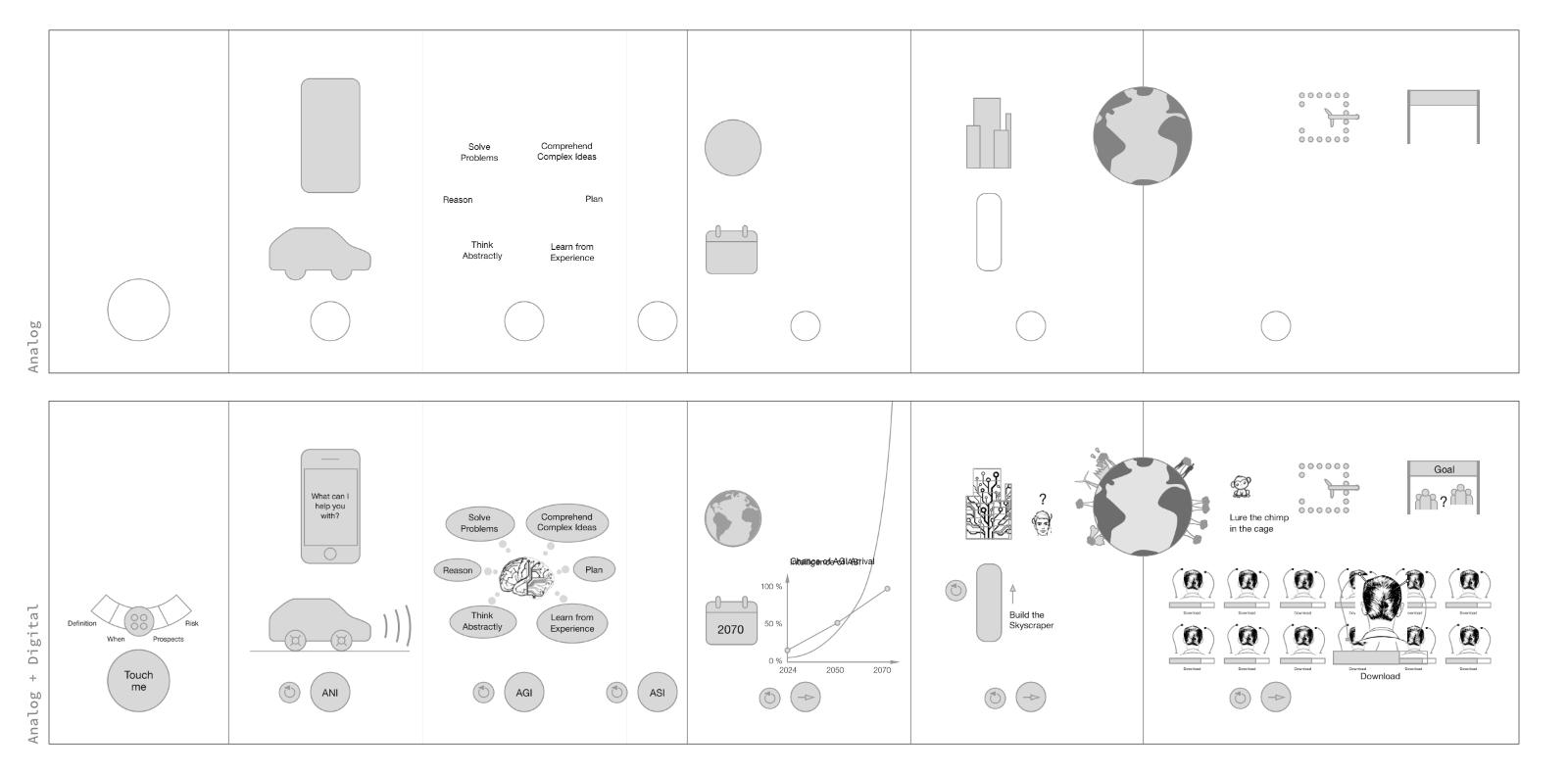

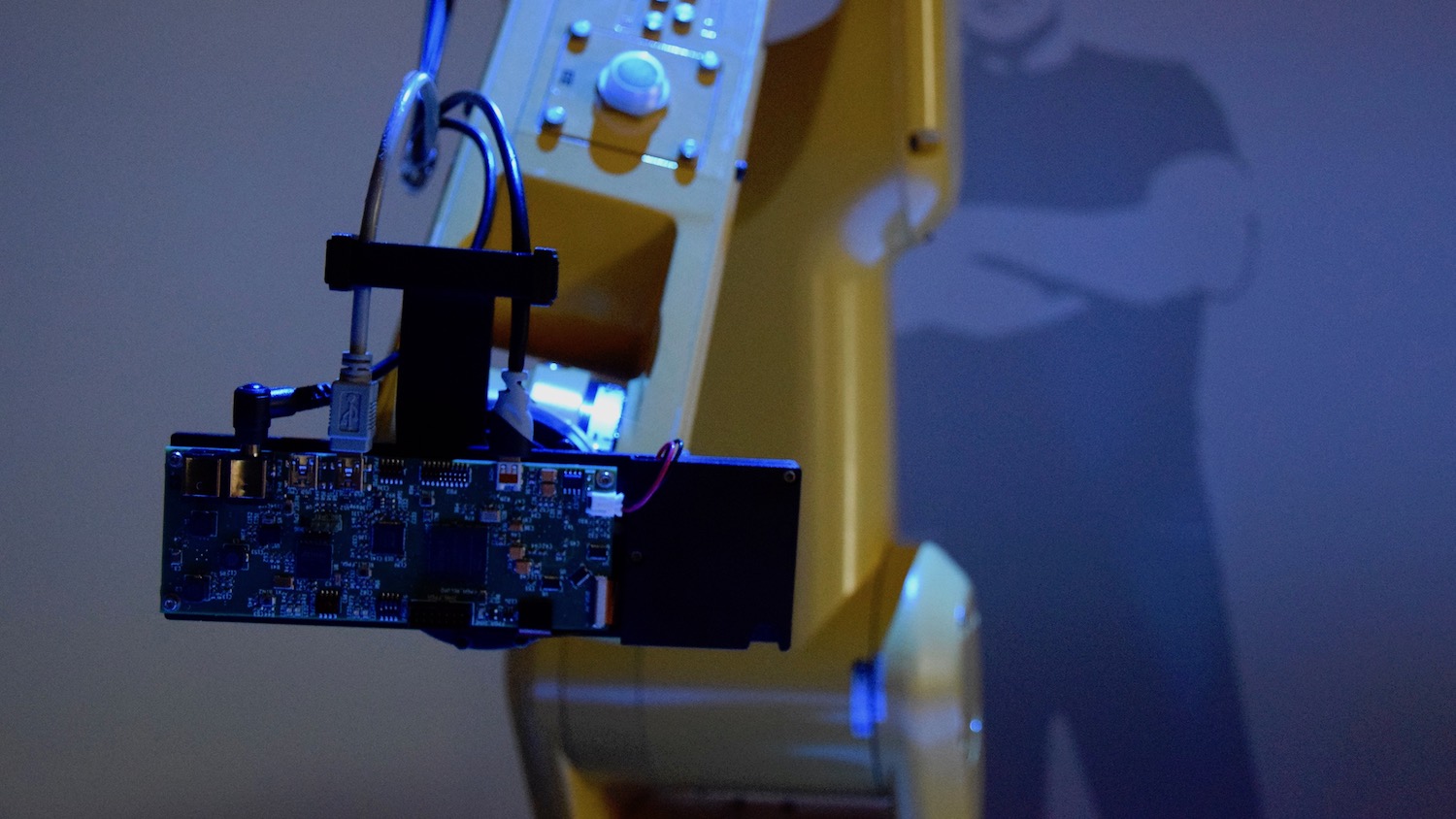

An interactive miniature laser projector is attached to the tip of its arm. This allows a very interesting mixture of analog and digital content. The story is laid out in front of the visitor on an analog desk and is brought to life during the course of the experience with digital live projection mapping. The robot points at an analog piece on the desk, which is digitally augmented while the robot talks about it. By measuring the reflected laser light and processing it with some algorithms, it is possible to detect fingers and thus interactions within the projection of the laser projector. This is the input and communication interface for the visitors.
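The detection idea can be illustrated with a minimal sketch. It assumes that the reflected-light measurements can be arranged as a small 2D intensity image and compared against a reference image of the empty desk. This arrangement, the function name `detect_touch`, and the parameters `threshold` and `min_pixels` are hypothetical and not taken from the project, which used its own algorithms.

```python
def detect_touch(frame, baseline, threshold=0.3, min_pixels=3):
    """Return the (row, col) centroid of the pixels that differ from the baseline.

    frame and baseline are equally sized 2D lists of reflectance values.
    Returns None if fewer than min_pixels changed (treated as noise).
    All parameter values are illustrative assumptions.
    """
    changed = [
        (r, c)
        for r, (row, base_row) in enumerate(zip(frame, baseline))
        for c, (value, base) in enumerate(zip(row, base_row))
        if abs(value - base) > threshold
    ]
    if len(changed) < min_pixels:
        return None
    # The centroid of the changed pixels serves as the estimated finger position.
    row_mean = sum(r for r, _ in changed) / len(changed)
    col_mean = sum(c for _, c in changed) / len(changed)
    return (row_mean, col_mean)
```

A finger covering a few neighboring pixels yields a centroid in image coordinates, while a single flickering pixel is ignored.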

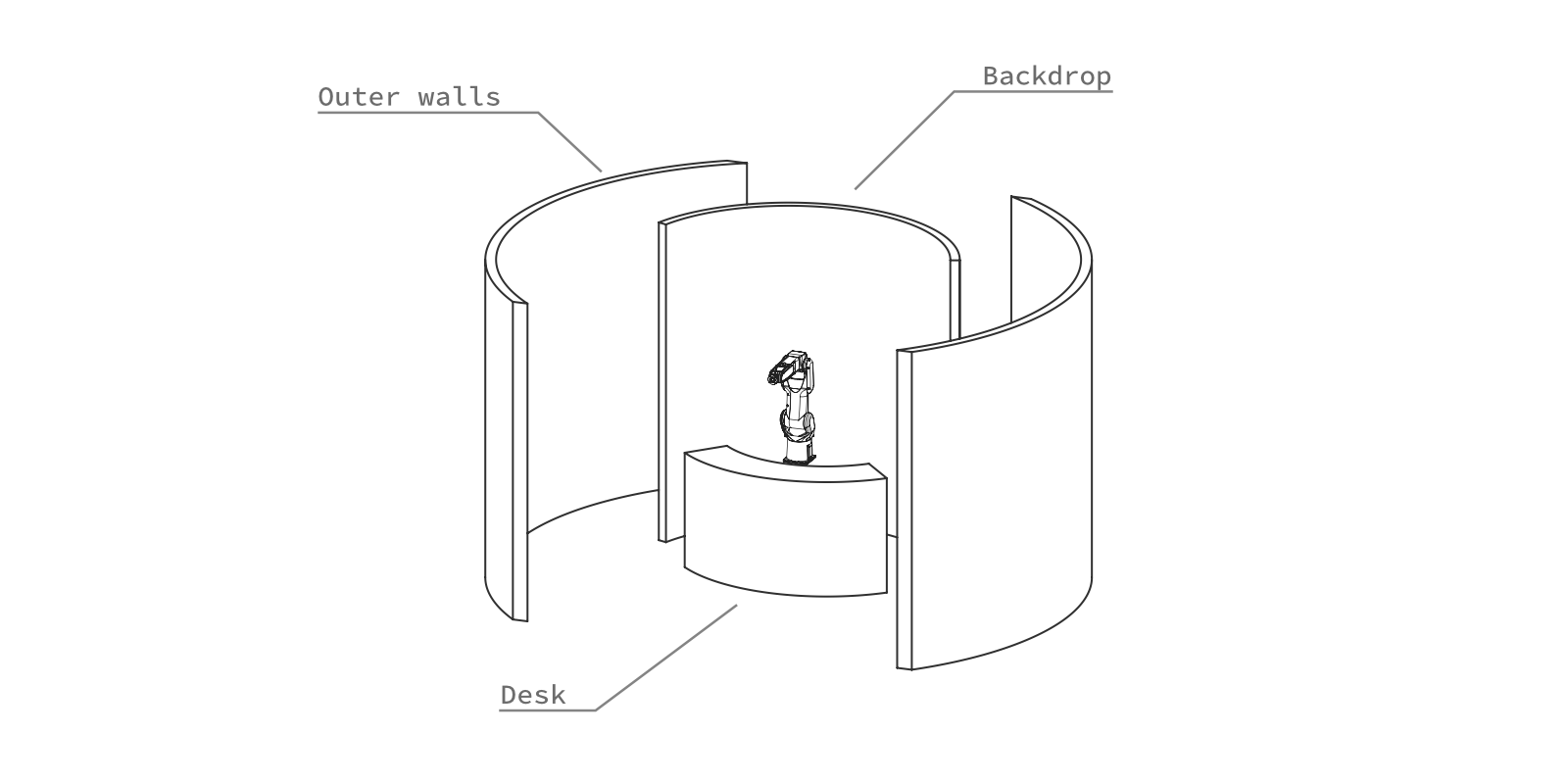

The circular room concept is oriented on the circular motion range of the robot. It allows for an inviting open front, so that the exhibit is easy to see, but still closed enough to affect the atmosphere through light and sound. For example, the robot is illuminated by a projector from the ceiling when the exhibit is idle. It can also be used to illuminate the desk and guide the visitor with light. The shape of the room also incorporates a clear path to the main exhibition, which is located behind the introduction. To further emphasize this, once the introduction has been traversed, the lighting in the room is turned down and the lights at the exits are turned on.

Throughout the exhibition, light, voice and the motion of the robot arm are used to tell the story and guide the visitor. It took a few iterations to create a seamless, consistent and easy to follow narrative. This includes the selection of the proper content, its meaningful arrangement, the use of illustrative examples, the question where which interactions make sense, and the overall visual and auditory concept.

The first main chapter is the introduction, where the visitor is introduced to the interaction principle of the novel interactive laser projector and where it is also possible to jump to a specific chapter. The next part is the definition of terms like artificial narrow intelligence and artificial superintelligence. This is followed by an estimation of when this could actually become reality. Then the control problem is illuminated with examples and quotations. Finally, the exhibit shows what we should focus on more in order to approach the control problem. The robot, the AI within the exhibit, ultimately applies the idea of the control problem to itself. What would happen if it were a superintelligence, with the current goal of spreading the knowledge of this exhibition to as many people as possible?

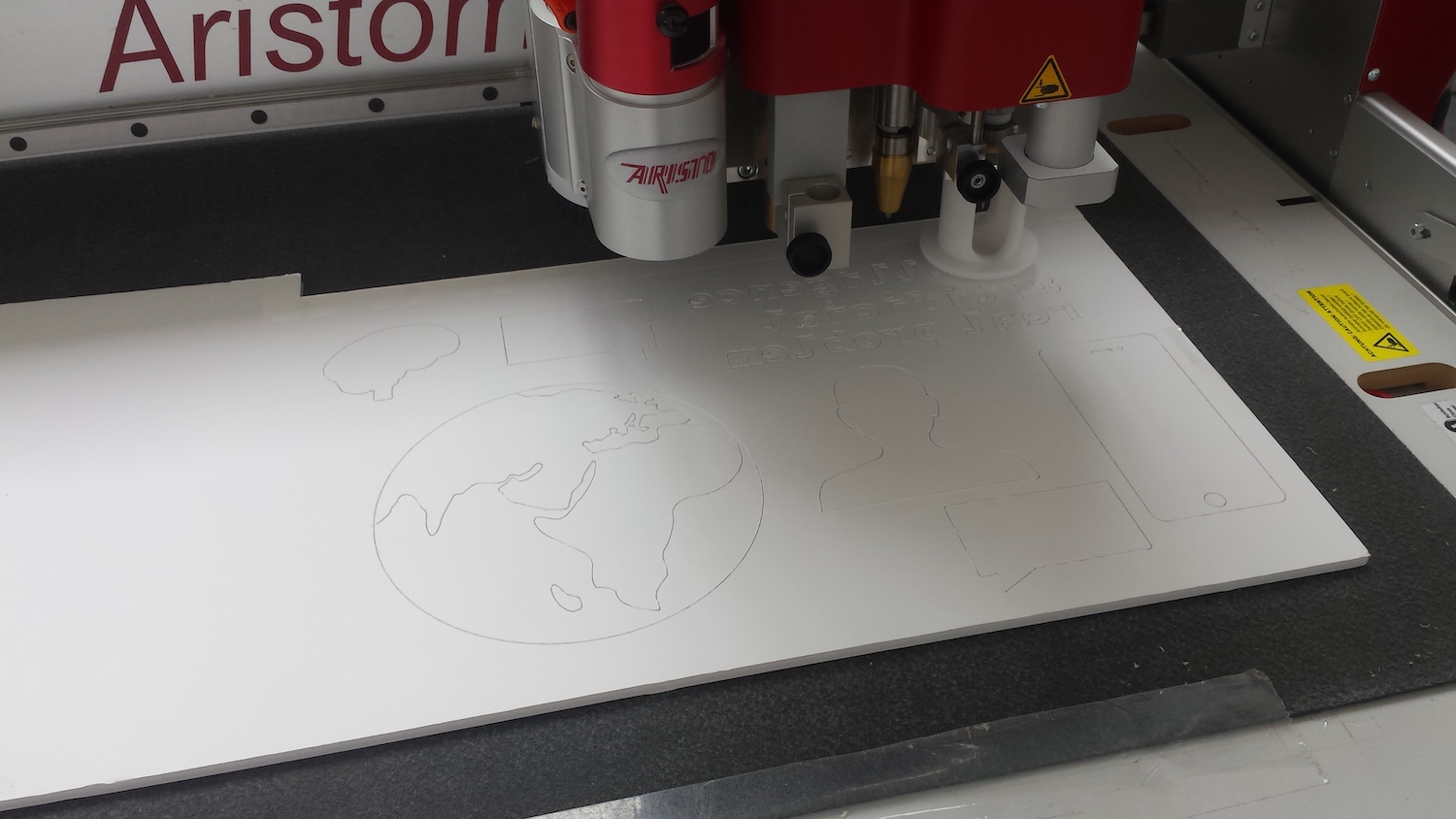

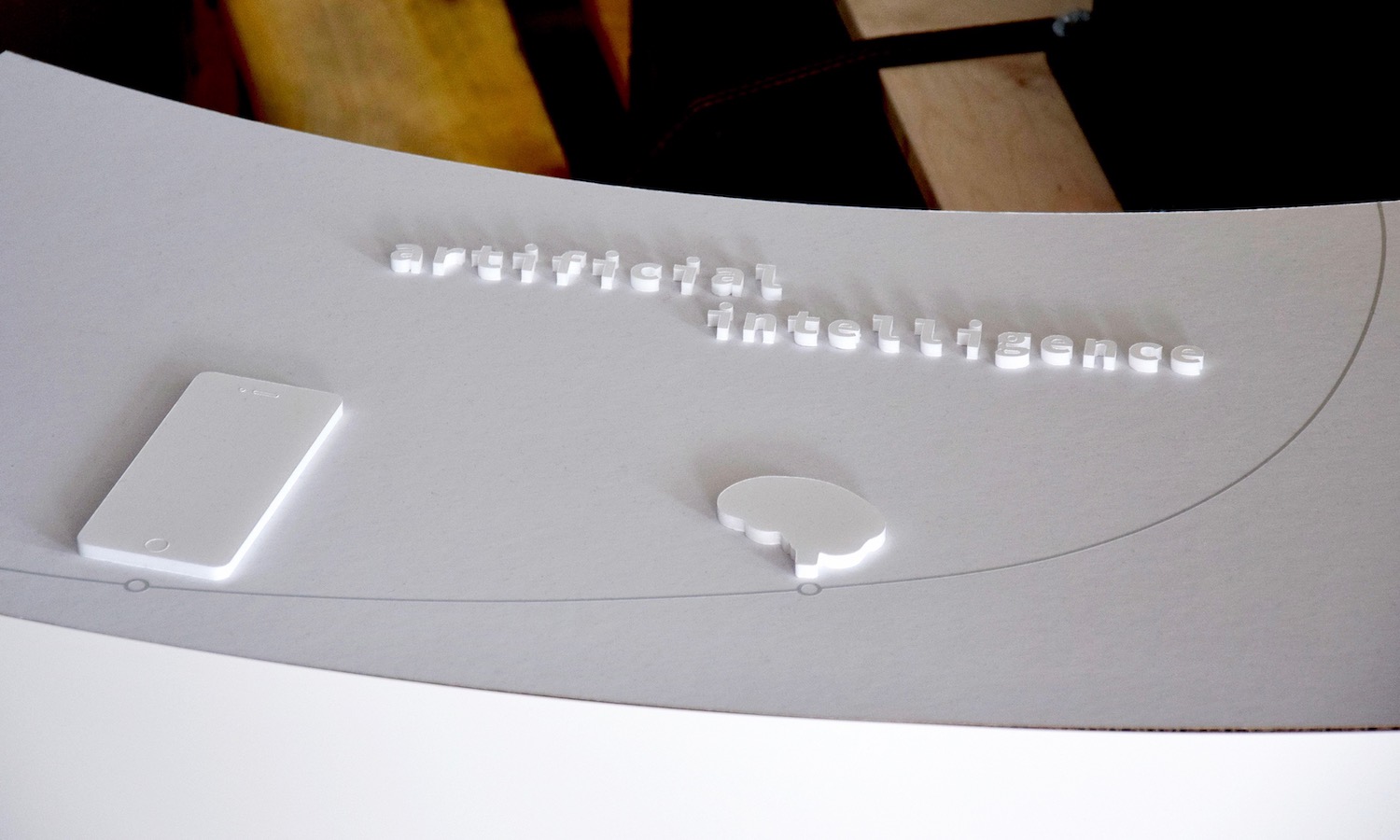

The content on the desk is an interesting interplay between analog and digital. A layout for the physical analog desk landscape and the digital overlay had to be designed. This started with very rough »wireframes« to get a feeling for the concept, the sizes and the placement, and ended with a layout and story that has a certain flow and is not just a series of facts. Physical typography and some other objects, which are later digitally augmented, already give a brief overview of the exhibition.

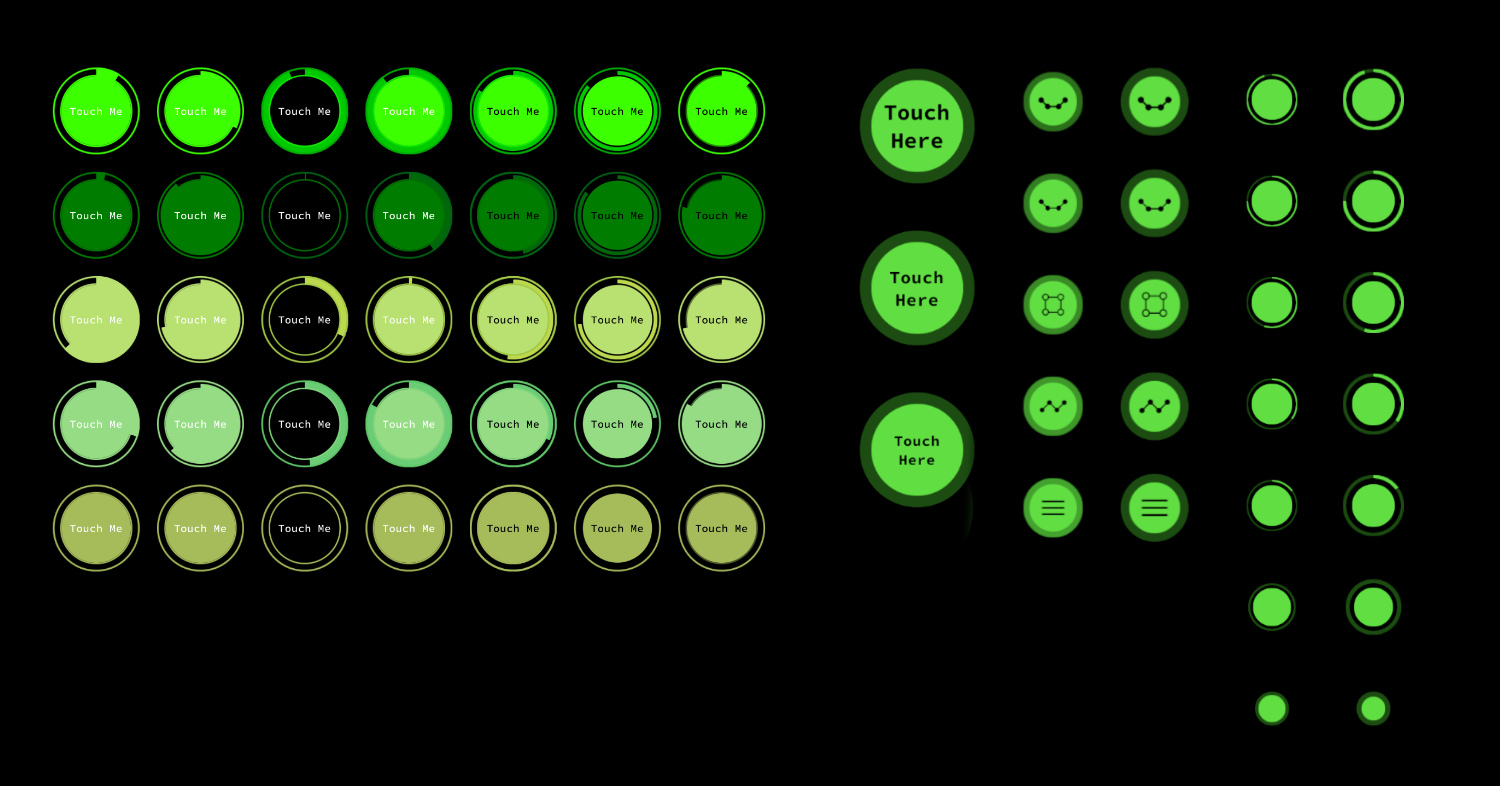

Within the story, the visitor takes the place of humanity. Interactions at very specific points are used to reinforce this identification. Where humanity has no control, there is no interaction or »control«, to emphasize our helplessness in the face of the control problem. From a technical point of view, it is not necessary to touch the surface to trigger an input with the laser projector. You can also »touch« the button in the air. To detect only the intended interactions and prevent accidental activation, the user has to remain briefly on the interactive element. This process has to be visualized and is incorporated as feedback into the design of the buttons. In the final version, the buttons give immediate feedback by getting bigger, as we are used to from touch screens. Then an arc around the button visualizes the activation progress.
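The dwell-based activation described above can be sketched as a small timer. This is only an illustration of the principle, not the project's Unity code. The class name `DwellButton` and the hold duration `dwell_seconds` are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class DwellButton:
    """Button that activates only after a finger has rested on it long enough."""

    dwell_seconds: float = 1.0  # hypothetical hold duration, not from the project
    elapsed: float = 0.0
    activated: bool = False

    def update(self, finger_inside: bool, dt: float) -> float:
        """Advance the timer by dt seconds and return the progress in [0, 1]."""
        if self.activated:
            return 1.0
        if finger_inside:
            self.elapsed = min(self.elapsed + dt, self.dwell_seconds)
        else:
            # Leaving the button cancels the pending activation.
            self.elapsed = 0.0
        if self.elapsed >= self.dwell_seconds:
            self.activated = True
        return self.elapsed / self.dwell_seconds
```

The returned progress value could drive the arc around the button, and the enlargement of the button could be triggered as soon as `finger_inside` is true.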

The font used is »Source Code Pro«. It has a technical appearance and thus a visual connection to the topic of software and AI. The color blue was chosen to represent artificial intelligence. We are already used to this color through artistic representations that often use this cold color to represent AI. In contrast to this is the role of humanity, for which green was chosen as a color to represent nature, life and hope. Of course, the colors had to be tested and adapted for the output medium, the laser projector.

The backdrop separates the exhibit from the room behind it, forms the background for the robot and is the place where quotations are displayed. Two life-size silhouettes of Stephen Hawking and Elon Musk are applied to it, stating two of the quotations. They are illuminated while »speaking« and otherwise recede into the background due to their light grey color, which is, however, still visible from a distance.

The robot's voice is responsible for a large part of the knowledge transfer. It sounds intentionally a bit artificial. The first words when a visitor enters the room are »Welcome Human« to make the roles between visitor and robot or human and machine clear right from the first moment.

Technical Implementation

The desk, the backdrop and the outer walls are made of wooden skeletons that can be folded for easy assembly and disassembly. The surface of the desk is white honeycomb cardboard. On its surface are the analog elements made of 5 mm foam board. Both were cut on a flatbed cutter. Three large polystyrene sheets are attached to the backdrop skeleton. The silhouettes were plotted on plastic film and applied to the polystyrene sheets.

There are two projectors installed on the ceiling. One is responsible for displaying content on the backdrop. The other one is responsible for the light effects on the robot and the desk and for larger projections, such as when the entire exponential curve is illuminated.

The interactive laser projector from a Bosch research department needed a small holder to attach it to the robot arm. The robot arm and the laser projector were provided by a cooperation with Milla & Partner and Bosch.

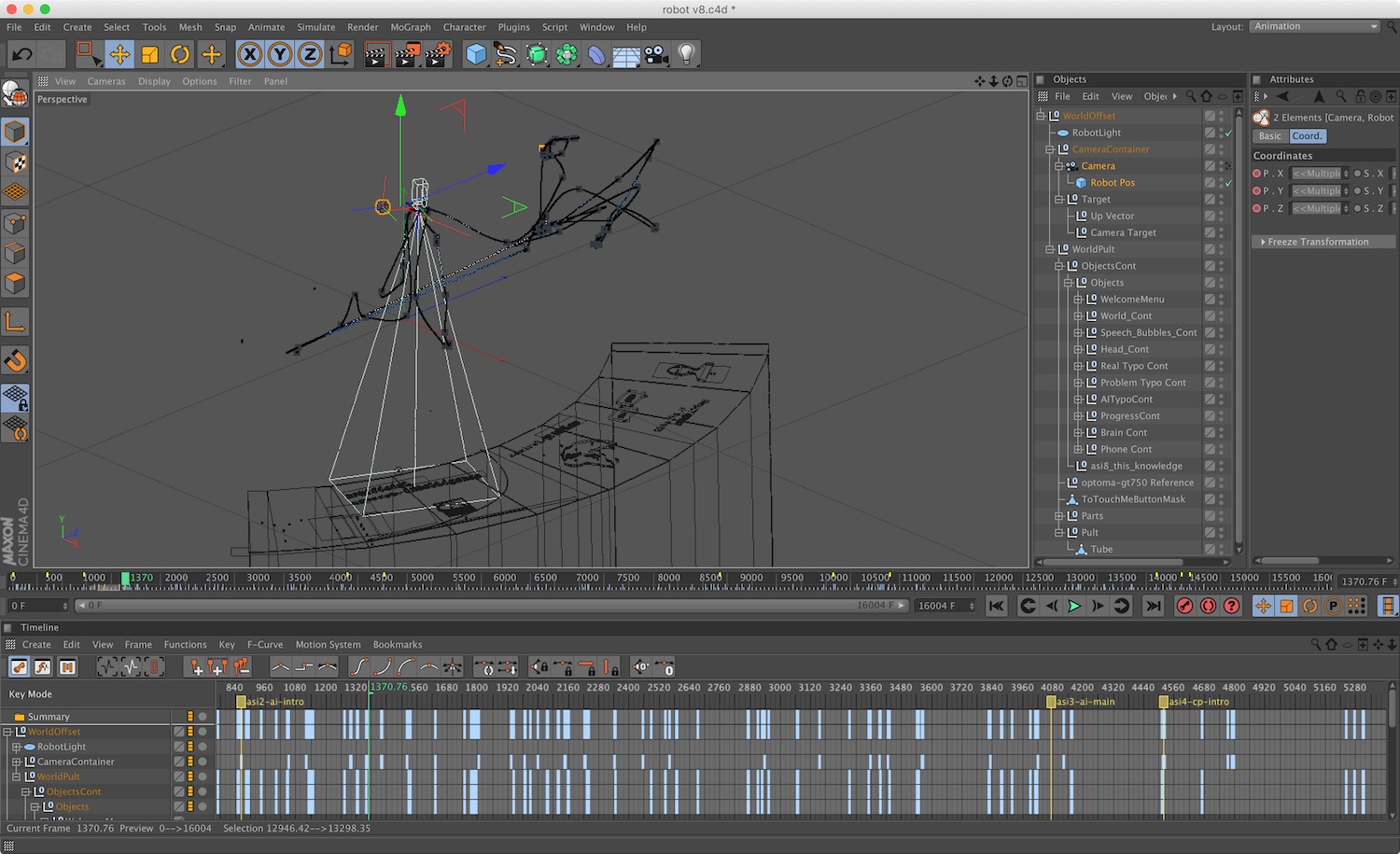

Both the digital and physical kinetic robot movements were animated in Cinema 4D and had to be precisely synchronized with the sound of the robot voice. All virtual cameras and physical projectors had to be calibrated and the analog and digital world had to be perfectly aligned for the complicated live projection mapping to work. From the Cinema 4D animation, a so-called TP program was created, i.e. a program with the motion paths that can be read by the robot.

The actual live rendering was realized with Unity. The animations were imported from Cinema 4D.

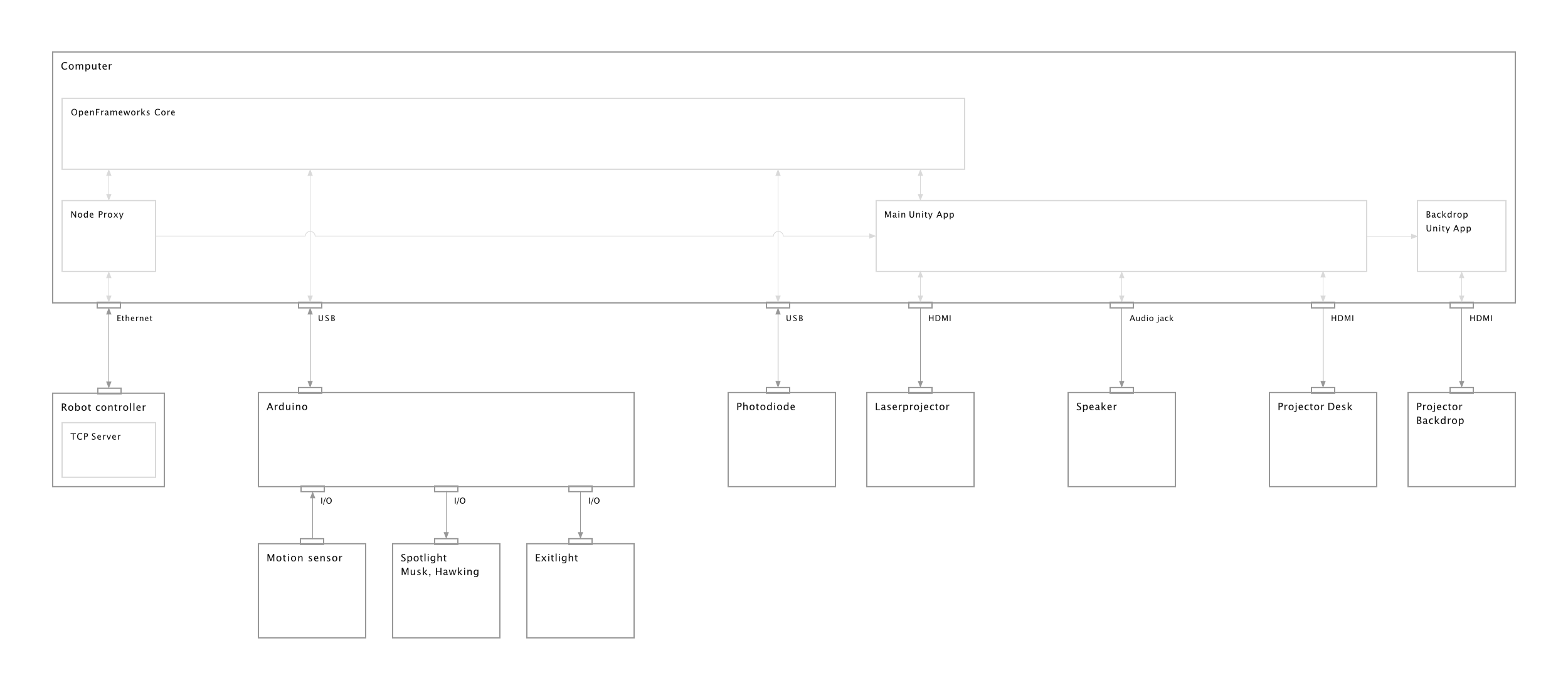

A custom-written »TCP server« running on the robot makes it possible to launch programs on the robot and to receive events and live position data. During operation, it sends the live position data to a node proxy on a computer, which forwards the data to the »OpenFrameworks Core« program and simultaneously to the Unity rendering applications. Each projector has its own Unity full-screen application rendering live content. The »OpenFrameworks Core« is responsible for the main logic and states. It also communicates with an Arduino, which receives data from a motion sensor and controls a couple of lights in the exhibition. Unity positions the virtual camera with the live position data so that the virtual camera »sees the same thing« as the laser projector in the real world. The digital animation is also controlled by this position data, by calculating what percentage of the robot's path has already been covered.
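One way to derive such a percentage from a live position is to project the position onto the planned path and measure the distance covered up to that point. The following sketch assumes that the path is available as a list of 3D waypoints. The function name `path_progress` and the nearest-point approach are assumptions for illustration, not the project's actual implementation.

```python
import math


def path_progress(waypoints, position):
    """Return the fraction (0..1) of a polyline covered at the point nearest to position.

    waypoints is a list of (x, y, z) tuples describing the planned path,
    position is the live (x, y, z) position reported by the robot.
    """
    segments = list(zip(waypoints, waypoints[1:]))
    lengths = [math.dist(a, b) for a, b in segments]
    total = sum(lengths)
    if total == 0:
        return 0.0
    best_distance = math.inf
    best_along = 0.0
    covered = 0.0  # path length of all segments before the current one
    for (a, b), length in zip(segments, lengths):
        if length > 0:
            # Parameter of the orthogonal projection onto the segment, clamped to [0, 1].
            dot = sum((p - ai) * (bi - ai) for p, ai, bi in zip(position, a, b))
            t = max(0.0, min(1.0, dot / length**2))
        else:
            t = 0.0
        closest = [ai + t * (bi - ai) for ai, bi in zip(a, b)]
        distance = math.dist(position, closest)
        if distance < best_distance:
            best_distance = distance
            best_along = covered + t * length
        covered += length
    return best_along / total
```

The resulting fraction could then be used as the normalized playback time of the imported animation, so that the projection stays aligned with the arm even if the robot runs slightly faster or slower than planned. Note that this simple nearest-point search can be ambiguous when a path crosses itself.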

The signals of the photodiode, which measures the light reflected by the laser projector, are processed when an interactive state is encountered. Some image recognition code was written using OpenCV to recognize fingers and touches within this data stream. And within Unity, ray-casting is used to calculate which button is pressed. Visual feedback, the user interface and more complex animations were also programmed within Unity.
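The geometric idea behind the ray-casting step can be sketched independently of Unity: a ray from the projector through the detected touch point is intersected with the desk plane, and the hit point is tested against the button areas. The sketch below assumes a desk lying in the plane z = 0 and circular buttons. The function names and the button dictionary are hypothetical, and Unity's own ray-casting API is not used here.

```python
def ray_plane_hit(origin, direction, plane_z=0.0):
    """Intersect a ray with the horizontal plane z = plane_z.

    Returns the (x, y) hit point, or None if the ray is parallel to the
    plane or points away from it.
    """
    dz = direction[2]
    if abs(dz) < 1e-9:
        return None
    t = (plane_z - origin[2]) / dz
    if t < 0:
        return None
    return (origin[0] + t * direction[0], origin[1] + t * direction[1])


def pressed_button(origin, direction, buttons):
    """Return the name of the circular button hit by the ray, or None.

    buttons maps a name to (center_x, center_y, radius) on the desk plane.
    """
    hit = ray_plane_hit(origin, direction)
    if hit is None:
        return None
    for name, (cx, cy, radius) in buttons.items():
        if (hit[0] - cx) ** 2 + (hit[1] - cy) ** 2 <= radius**2:
            return name
    return None
```

For example, with a button `"next"` centered at (1.0, 0.0) with radius 0.2, a ray starting at (0, 0, 1) in direction (1, 0, -1) reaches the desk at (1.0, 0.0) and therefore selects that button.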

Result

This project was part of my interaction design studies at HfG Schwäbisch Gmünd.

Together with Lara Koch, 7th semester, »Bachelor Thesis«.

Examiner: Prof. Marc Guntow, Supervisor: Prof. Jens Döring